
In a new study, scientists used AI to make people remember things they had never seen

Scientists have unexpectedly found that when people are shown high-quality fake footage from movie remakes that never existed, they begin to remember having seen those remakes and even compare them to the originals.

The findings were published in the journal PLOS One. The scientists believe this shows how easy it is to "plant" in a person's mind memories of events that never happened.

As part of the study, people were shown clips from movies that had been altered with deepfakes. Artificial intelligence replaced the original actor's face with the face of another actor chosen by the authors of the study.

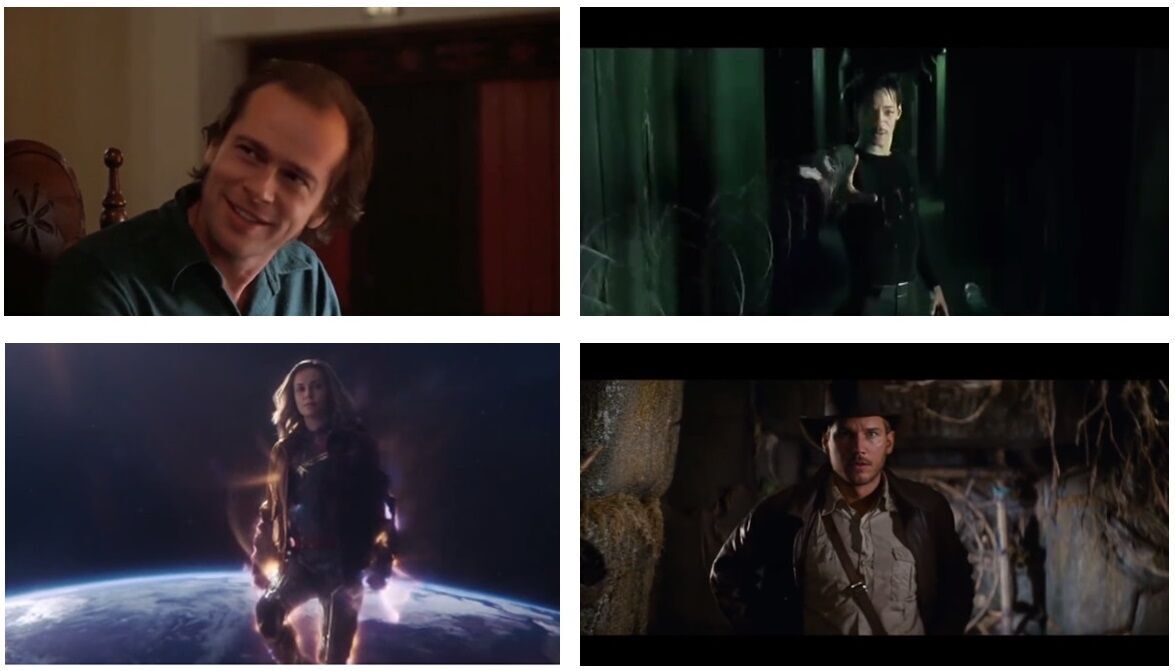

For example, 436 subjects were shown a non-existent reboot of The Matrix in which Neo was played not by Keanu Reeves but by Will Smith, who really was in contention for the role. They were also shown The Shining with Brad Pitt in place of Jack Nicholson, Captain Marvel with Charlize Theron replacing Brie Larson, and Indiana Jones: Raiders of the Lost Ark with Chris Pratt instead of Harrison Ford.

It turned out that even short but well-made clips were enough to make the subjects believe they had seen the movie. Some participants even began to recall the emotions they had felt while watching it and whether it was better than the original.

At the same time, the authors of the experiment urge readers not to dramatize the results. In their view, deepfake technology is not the only way to alter a person's memories.

"Deepfakes proved to be no more effective in distorting memory than simple textual descriptions," the article says.

So, according to the scientists, deepfakes are not strictly necessary to trick someone into accepting false memories.

"We shouldn't jump to conclusions about a bleak future based on our fears of new technologies," said Gillian Murphy, lead author of the article and a disinformation researcher at University College Cork in Ireland.

In an interview with The Daily Beast, she acknowledged that there are very real harms from deepfakes, "but we should always gather evidence of those harms before rushing to solve problems we've only just realized exist."

On average, 49% of participants were deceived by the fake videos, and 41% of that group claimed that the Captain Marvel remake was better than the original.

"Our findings are not particularly worrisome as they do not indicate any unique threat posed by deepfakes compared to existing forms of disinformation," Murphy concluded.
